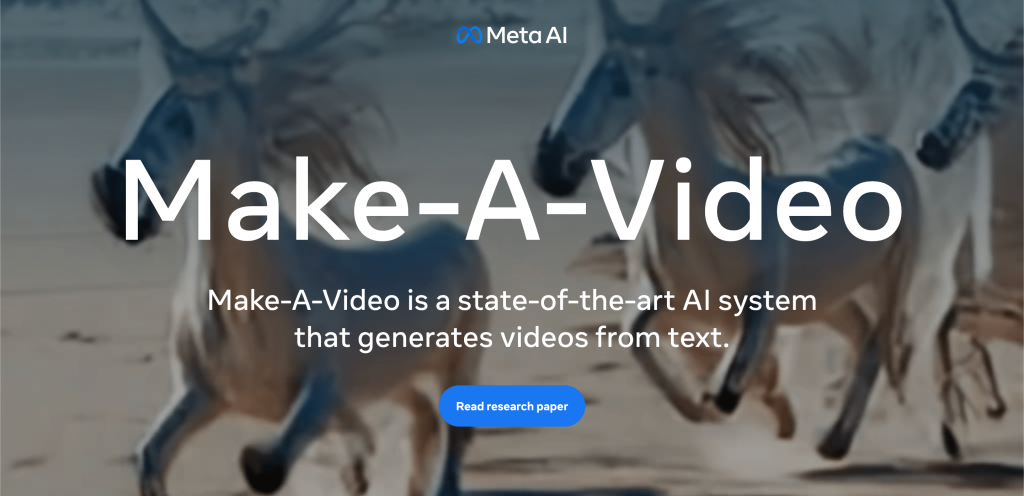

Mark Zuckerberg has announced an AI video generator on his Facebook page.

The Make-A-Video AI generates short, silent video clips from a text description, much as the DALL-E generator does for images.

The system learns from captioned images what the world looks like and how people commonly describe it. It also learns from unlabeled videos how the world moves.

In his post, Zuckerberg wrote:

All of these videos were generated by an AI system that our team at Meta built. We call it Make-A-Video. You give it a text description and it creates a video for you. We gave it descriptions like: “a teddy bear painting a self-portrait”, “a baby sloth with a knitted hat trying to figure out a laptop”, “a spaceship landing on mars”, and “a robot surfing a wave in the ocean”. This is pretty amazing progress. It’s much harder to generate video than photos because beyond correctly generating each pixel, the system also has to predict how they’ll change over time. Make-A-Video solves this by adding a layer of unsupervised learning that enables the system to understand motion in the physical world and apply it to traditional text-to-image generation.

The AI can do the following:

- Generate videos based on a text description;

- Add motion to static images;

- Provide a new look to an existing video.

The generated videos have some limitations, though:

- Less than five seconds in length;

- 16 frames per second;

- Dimensions: 768×768 pixels.
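To give a sense of scale, the limits above can be turned into a rough upper bound on how much the system has to generate per clip. This is only a back-of-the-envelope sketch: the function name `clip_budget` is made up for illustration, and the 3 bytes per pixel (uncompressed 8-bit RGB) is an assumption, not something stated in the announcement.

```python
# Limits quoted in the article.
MAX_SECONDS = 5        # clips are shorter than this, so it is an upper bound
FPS = 16               # frames per second
WIDTH = HEIGHT = 768   # frame dimensions in pixels

# Assumption (not from the article): uncompressed 8-bit RGB, 3 bytes per pixel.
BYTES_PER_PIXEL = 3


def clip_budget(seconds: float) -> dict:
    """Return frame, pixel, and raw-size counts for a clip of the given length."""
    frames = int(seconds * FPS)
    pixels_per_frame = WIDTH * HEIGHT
    total_pixels = frames * pixels_per_frame
    raw_mib = total_pixels * BYTES_PER_PIXEL / (1024 * 1024)
    return {
        "frames": frames,
        "pixels_per_frame": pixels_per_frame,
        "total_pixels": total_pixels,
        "raw_mib": raw_mib,
    }


upper = clip_budget(MAX_SECONDS)
print(upper)
```

Under these assumptions, a clip tops out at about 80 frames of roughly 590,000 pixels each, which illustrates why predicting how pixels change over time is harder than producing a single image.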

Here’s a video it generated:

Follow the link to submit an access request: https://forms.gle/dZ4kudbydHPgfzQ48.